31 KiB

+++ title="Confronting theoretical design with observed performances" date=2022-09-26 +++

During the past years, we have thought a lot about possible design decisions and their theoretical trade-offs for Garage. In particular, we pondered the impacts of data structures, networking methods, and scheduling algorithms. Garage worked well enough for our production cluster at Deuxfleurs, but we also knew that people started to experience some unexpected behaviors, which motivated us to start a round of benchmarks and performance measurements to see how Garage behaves compared to our expectations. This post presents some of our first results, which cover 3 aspects of performance: efficient I/O, "myriads of objects", and resiliency, reflecting the high-level properties we are seeking.

⚠️ Disclaimer

The results presented in this blog post must be taken with a (critical) grain of salt due to some limitations that are inherent to any benchmarking endeavor. We try to reference them as exhaustively as possible here, but other limitations might exist.

Most of our tests were made on simulated networks, which by definition cannot represent all the diversity of real networks (dynamic drop, jitter, latency, all of which could be correlated with throughput or any other external event). We also limited ourselves to very small workloads that are not representative of a production cluster. Furthermore, we only benchmarked some very specific aspects of Garage: our results are not an evaluation of the performance of Garage as a whole.

For some benchmarks, we used Minio as a reference. It must be noted that we did not try to optimize its configuration as we have done for Garage, and more generally, we have significantly less knowledge of Minio's internals compared to Garage, which could lead to underrated performance measurements for Minio. It must also be noted that Garage and Minio are systems with different feature sets. For instance, Minio supports erasure coding for higher data density and Garage doesn't, Minio implements way more S3 endpoints than Garage, etc. Such features necessarily have a cost that you must keep in mind when reading the plots we will present. You should consider Minio's results as a way to contextualize Garage's numbers, to justify that our improvements are not simply artificial in the light of existing object storage implementations.

The impact of the testing environment is also not evaluated (kernel patches, configuration, parameters, filesystem, hardware configuration, etc.). Some of these parameters could favor one configuration or software product over another. Especially, it must be noted that most of the tests were done on a consumer-grade PC with only a SSD, which is different from most production setups. Finally, our results are also provided without statistical tests to validate their significance, and might have insufficient ground to be claimed as reliable.

When reading this post, please keep in mind that we are not making any business or technical recommendations here, and this is not a scientific paper either; we only share bits of our development process as honestly as possible. Make your own tests if you need to take a decision, remember to read benchmarking crimes and to remain supportive and caring with your peers ;)

About our testing environment

We made a first batch of tests on Grid5000, a large-scale and flexible testbed for experiment-driven research in all areas of computer science, which has an open access program. During our tests, we used part of the following clusters: nova, paravance, and econome, to make a geo-distributed topology. We used the Grid5000 testbed only during our preliminary tests to identify issues when running Garage on many powerful servers. We then reproduced these issues in a controlled environment outside of Grid5000, so don't be surprised then if Grid5000 is not always mentioned on our plots.

To reproduce some environments locally, we have a small set of Python scripts

called mknet tailored to our

needs1. Most of the following tests were run locally with mknet on a

single computer: a Dell Inspiron 27" 7775 AIO, with a Ryzen 5 1400, 16GB of

RAM and a 512GB SSD. In terms of software, NixOS 22.05 with the 5.15.50 kernel is

used with an ext4 encrypted filesystem. The vm.dirty_background_ratio and

vm.dirty_ratio have been reduced to 2 and 1 respectively: with default

values, the system tends to freeze under heavy I/O load.

Efficient I/O

The main purpose of an object storage system is to store and retrieve objects

across the network, and the faster these two functions can be accomplished,

the more efficient the system as a whole will be. For this analysis, we focus on

2 aspects of performance. First, since many applications can start processing a file

before receiving it completely, we will evaluate the time-to-first-byte (TTFB)

on GetObject requests, i.e. the duration between the moment a request is sent

and the moment where the first bytes of the returned object are received by the client.

Second, we will evaluate generic throughput, to understand how well

Garage can leverage the underlying machine's performance.

Time-to-First-Byte - One specificity of Garage is that we implemented S3 web endpoints, with the idea to make it a platform of choice to publish static websites. When publishing a website, TTFB can be directly observed by the end user, as it will impact the perceived reactivity of the page being loaded.

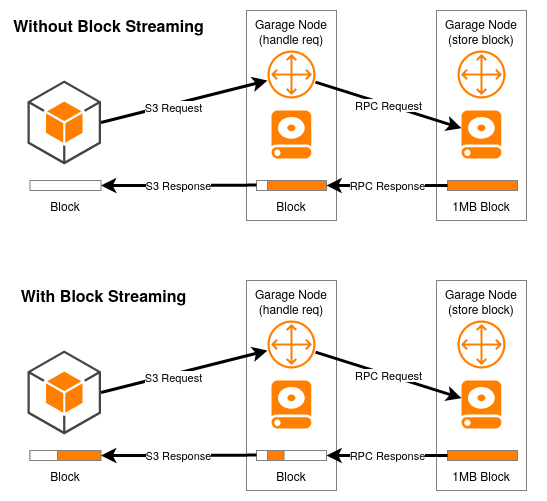

Up to version 0.7.3, time-to-first-byte on Garage used to be relatively high.

This can be explained by the fact that Garage was not able to handle data internally

at a smaller granularity level than entire data blocks, which are up to 1MB chunks of a given object

(a size which can be configured).

Let us take the example of a 4.5MB object, which Garage will split by default into four 1MB blocks and one 0.5MB block.

With the old design, when you were sending a GET

request, the first block had to be fully retrieved by the gateway node from the

storage node before it starts to send any data to the client.

With Garage v0.8, we added a data streaming logic that allows the gateway to send the beginning of a block without having to wait for the full block to be received from the storage node. We can visually represent the difference as follow:

As our default block size is only 1MB, the difference should be marginal on

fast networks: it takes only 8ms to transfer 1MB on a 1Gbps network,

adding at most 8ms of latency to a GetObject request (assuming no other

data transfer is happening in parallel). However,

on a very slow network, or a very congested link with many parallel requests

handled, the impact can be much more important: on a 5Mbps network, it takes at least 1.6 seconds

to transfer our 1MB block, and streaming will heavily improve user experience.

We wanted to see if this theory holds in practice: we simulated a low latency

but slow network using mknet and did some requests with block streaming (Garage v0.8 beta) and

without (Garage v0.7.3). We also added Minio as a reference. To

benchmark this behavior, we wrote a small test named

s3ttfb,

whose results are shown on the following figure:

Garage v0.7, which does not support block streaming, gives us a TTFB between 1.6s and 2s, which matches the time required to transfer the full block which we calculated above. On the other side of the plot, we can see Garage v0.8 with a very low TTFB thanks to the streaming feature (the lowest value is 43ms). Minio sits between the two Garage versions: we suppose that it does some form of batching, but smaller than our initial 1MB default.

Throughput - As soon as we publicly released Garage, people started

benchmarking it, comparing its performances to writing directly on the

filesystem, and observed that Garage was slower (eg.

#288). To improve the

situation, we did some optimizations, such as putting costly processing like hashing on a dedicated thread,

and many others

(#342,

#343), which led us to

version 0.8 "Beta 1". We also noticed that some of the logic we wrote

to better control resource usage

and detect errors, including semaphores and timeouts, was artificially limiting

performances. In another iteration, we made this logic less restrictive at the

cost of higher resource consumption under load

(#387), resulting in

version 0.8 "Beta 2". Finally, we currently do multiple fsync calls each time we

write a block. We know that this is expensive and did a test build without any

fsync call (see the

commit)

that will not be merged, only to assess the impact of fsync. We refer to it

as no-fsync in the following plot.

A note about fsync: for performance reasons, operating systems often do not

write directly to the disk when a process creates or updates a file in your

filesystem. Instead, the write is kept in memory, and flushed later in a batch

with other writes. If a power loss occurs before the OS has time to flush

data to disk, some writes will be lost. To ensure that a write is effectively

written to disk, the

fsync(2) system call must be used,

which effectively blocks until the file or directory on which it is called has been flushed from volatile

memory to the persistent storage device. Additionally, the exact semantic of

fsync differs from one OS to another

and, even on battle-tested software like Postgres, it was

"done wrong for 20 years".

Note that on Garage, we are still working on our fsync policy and thus, for

now, you should expect limited data durability in case of power loss, as we are

aware of some inconsistencies on this point (which we describe in the following

and plan to solve).

To assess performance improvements, we used the benchmark tool minio/warp in a non-standard configuration, adapted for small-scale tests, and we kept only the aggregated result named "cluster total". The goal of this experiment is to get an idea of the cluster performance with a standardized and mixed workload.

Minio, our reference point, gives us the best performances in this test. Looking at Garage, we observe that each improvement we made had a visible impact on performances. We also note that we have a progress margin in terms of performances compared to Minio: additional benchmarks, tests, and monitoring could help us better understand the remaining gap.

A myriad of objects

Object storage systems do not handle a single object but huge numbers of them: Amazon claims to handle trillions of objects on their platform, and Red Hat tout Ceph as being able to handle 10 billion objects. All these objects must be tracked efficiently in the system to be fetched, listed, removed, etc. In Garage, we use a "metadata engine" component to track them. For this analysis, we compare different metadata engines in Garage and see how well the best one scales to a million objects.

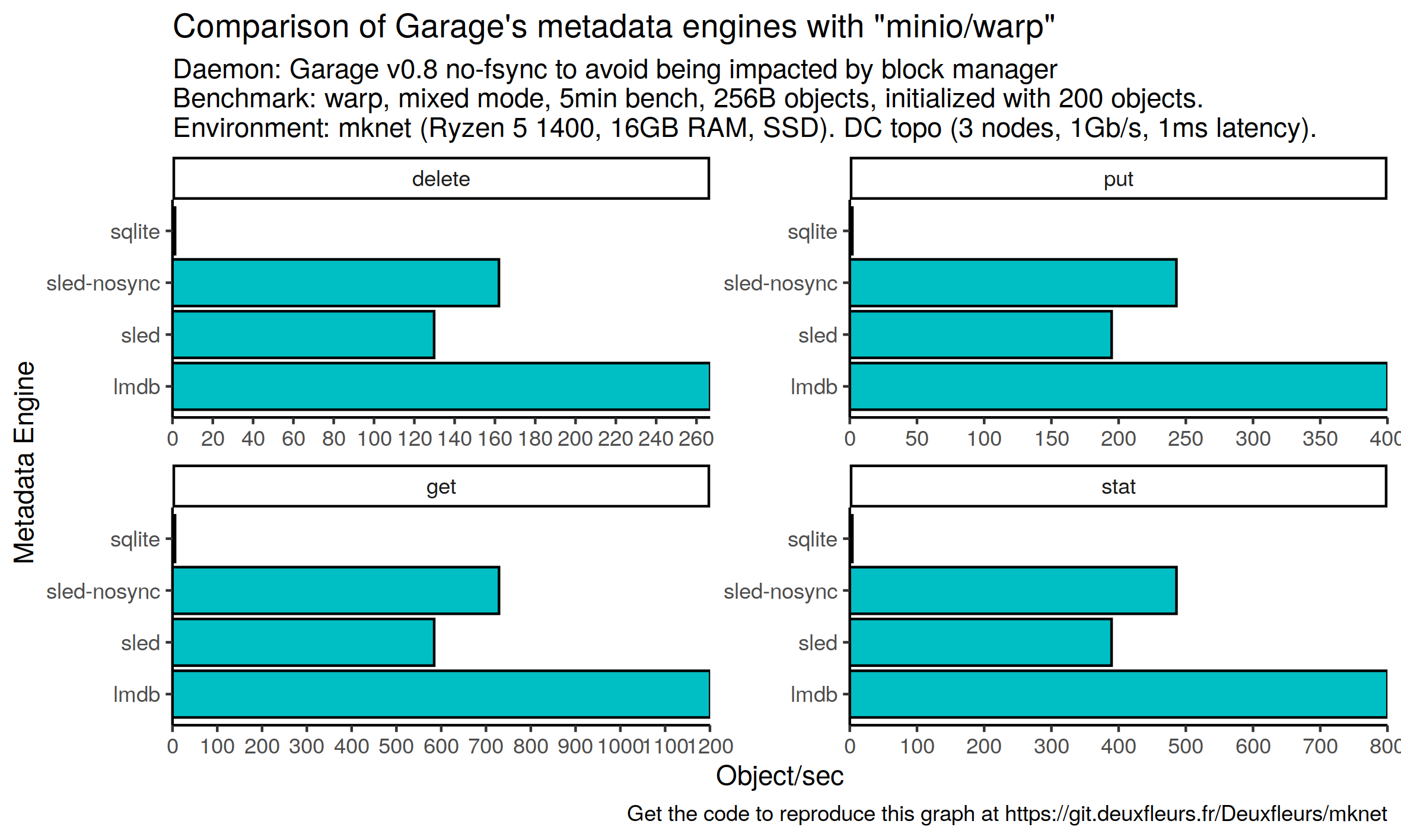

Testing metadata engines - With Garage, we chose not to store metadata directly on the filesystem, like Minio for example, but in a specialized on-disk B-Tree data structure; in other words, in an embedded database engine. Until now, the only supported option was sled, but we started having serious issues with it - and we were not alone (#284). With Garage v0.8, we introduce an abstraction semantic over the features we expect from our database, allowing us to switch from one metadata back-end to another without touching the rest of our codebase. We added two additional back-ends: LMDB (through heed) and SQLite (using Rusqlite). Keep in mind that they are both experimental: contrarily to sled, we have yet to run them in production for a significant time.

Similarly to the impact of fsync on block writing, each database engine we use

has its own fsync policy. Sled flushes its writes every 2 seconds by

default (this is

configurable).

LMDB default to an fsync on each write, which on early tests led to

abysmal performance. We thus added 2 flags,

MDB_NOSYNC

and

MDB_NOMETASYNC,

to deactivate fsync entirely. On SQLite, it is also possible to deactivate fsync with

pragma synchronous = off, but we have not started any optimization work on it yet:

our SQLite implementation currently still calls fsync for all write operations. Additionally, we are

using these engines through Rust bindings that do not support async Rust,

with which Garage is built, which has an impact on performance as well.

Our comparison will therefore not reflect the raw performances of

these database engines, but instead, our integration choices.

Still, we think it makes sense to evaluate our implementations in their current

state in Garage. We designed a benchmark that is intensive on the metadata part

of the software, i.e. handling large numbers of tiny files. We chose again

minio/warp as a benchmark tool, but we

configured it with the smallest possible object size it supported, 256

bytes, to put pressure on the metadata engine. We evaluated sled twice:

with its default configuration, and with a configuration where we set a flush

interval of 10 minutes (longer than the test) to disable fsync.

Note that S3 has not been designed for workloads that store huge numbers of small objects; a regular database, like Cassandra, would be more appropriate. This test has only been designed to stress our metadata engine and is not indicative of real-world performances.

Unsurprisingly, we observe abysmal performances with SQLite, as it is the engine we did not put work on yet,

and that still does an fsync for each write. Garage with the fsync-disabled LMDB backend performs twice better than

with sled in its default version and 60% better than the "no fsync" sled version in our

benchmark. Furthermore, and not depicted on these plots, LMDB uses way less

disk storage and RAM; we would like to quantify that in the future. As we are

only at the very beginning of our work on metadata engines, it is hard to draw

strong conclusions. Still, we can say that SQLite is not ready for production

workloads, and that LMDB looks very promising both in terms of performances and resource

usage, and is a very good candidate for being Garage's default metadata engine in

future releases, once we figure out the proper fsync tuning. In the future, we will need to define a data policy for Garage to help us

arbitrate between performance and durability.

To fsync or not to fsync? Performance is nothing without reliability, so we

need to better assess the impact of possibly losing a write after it has been validated.

Because Garage is a distributed system, even if a node loses its write due to a

power loss, it will fetch it back from the 2 other nodes that store it. But rare

situations can occur where 1 node is down and the 2 others validate the write and then

lose power before having time to flush to disk. What is our policy in this case? For storage durability,

we are already supposing that we never lose the storage of more than 2 nodes,

so should we also make the hypothesis that we won't lose power on more than 2 nodes at the same

time? What should we do about people hosting all of their nodes at the same

place without an uninterruptible power supply (UPS)? Historically, it seems that Minio developers also accepted

some compromises on this side

(#3536,

HN Discussion). Now, they seem to

use a combination of O_DSYNC and fdatasync(3p) - a derivative that ensures

only data and not metadata is persisted on disk - in combination with

O_DIRECT for direct I/O

(discussion,

example in Minio source).

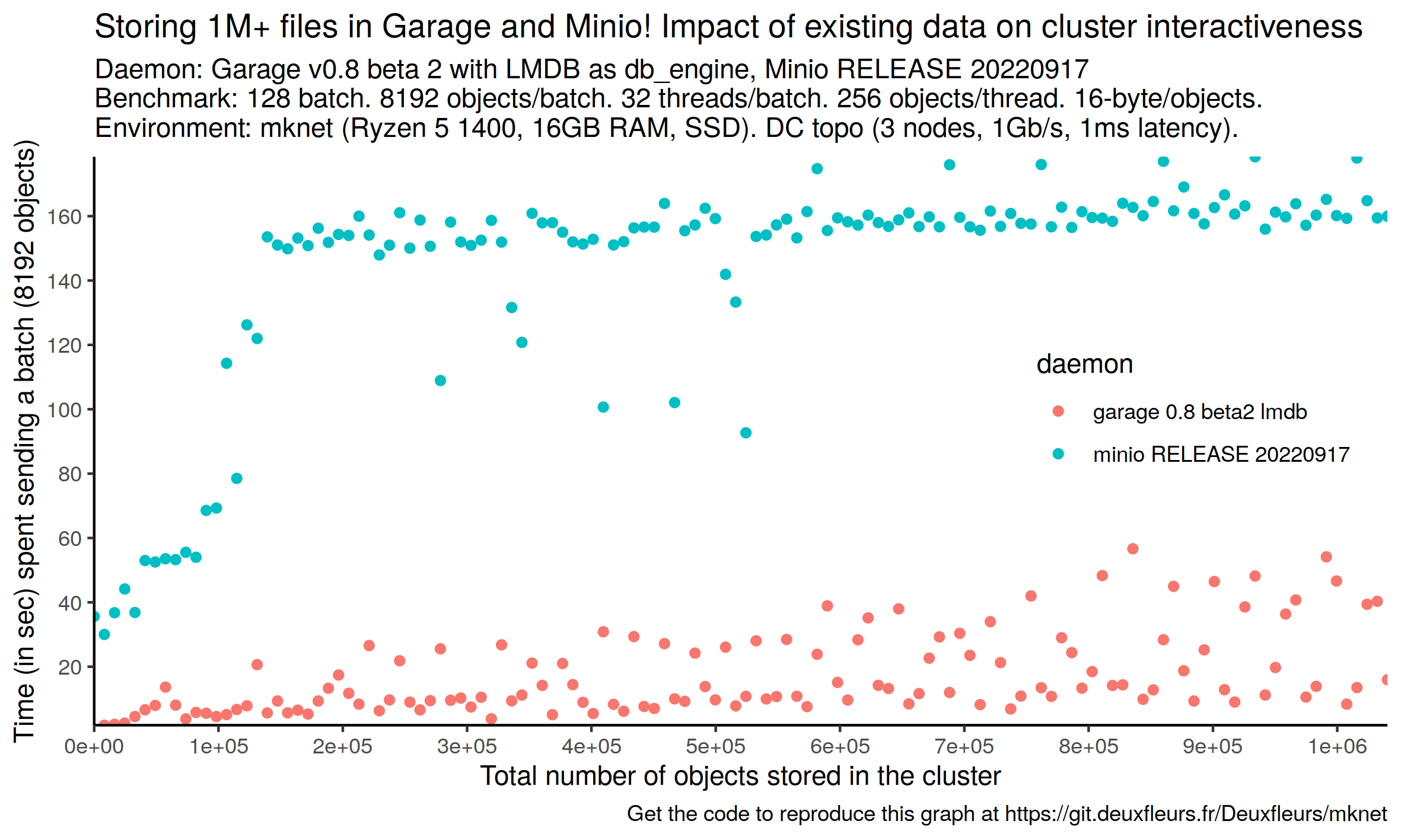

Storing a million objects - Object storage systems are designed not only for data durability and availability but also for scalability, so naturally, some people asked us how scalable Garage is. If giving a definitive answer to this question is out of the scope of this study, we wanted to be sure that our metadata engine would be able to scale to a million objects. To put this target in context, it remains small compared to other industrial solutions: Ceph claims to scale up to 10 billion objects, which is 4 orders of magnitude more than our current target. Of course, their benchmarking setup has nothing in common with ours, and their tests are way more exhaustive.

We wrote our own benchmarking tool for this test, s3billion2. The benchmark procedure consists in concurrently sending a defined number of tiny objects (8192 objects of 16 bytes by default) and measuring the wall clock time to the last object upload. This step is then repeated a given number of times (128 by default) to effectively create a target number of objects on the cluster (1M by default). On our local setup with 3 nodes, both Minio and Garage with LMDB were able to achieve this target. In the following plot, we show how much time it took Garage and Minio to handle each batch.

Before looking at the plot, you must keep in mind some important points regarding the internals of both Minio and Garage.

Minio has no metadata engine, it stores its objects directly on the filesystem.

Sending 1 million objects on Minio results in creating one million inodes on

the storage server in our current setup. So the performances of the filesystem

probably have a substantial impact on the observed results.

In our precise setup, we know that the

filesystem we used is not adapted at all for Minio (encryption layer, fixed

number of inodes, etc.). Additionally, we mentioned earlier that we deactivated

fsync for our metadata engine in Garage, whereas Minio has some fsync logic here slowing down the

creation of objects. Finally, object storage is designed for big objects, for which the

costs measured here are negligible. In the end, again, we use Minio only as a

reference point to understand what performance budget we have for each part of our

software.

Conversely, Garage has an optimization for small objects. Below 3KB, a separate file is not created on the filesystem but the object is directly stored inline in the metadata engine. In the future, we plan to evaluate how Garage behaves at scale with objects above 3KB, which we expect to be way closer to Minio, as it will have to create at least one inode per object. For now, we limit ourselves to evaluating our metadata engine and focus only on 16-byte objects.

It appears that the performances of our metadata engine are acceptable, as we have a comfortable margin compared to Minio (Minio is between 3x and 4x times slower per batch). We also note that, past the 200k objects mark, Minio's time to complete a batch of inserts is constant, while on Garage it still increases on the observed range. It could be interesting to know if Garage's batch completion time would cross Minio's one for a very large number of objects. If we reason per object, both Minio's and Garage's performances remain very good: it takes respectively around 20ms and 5ms to create an object. In a real-world scenario, at 100 Mbps, the upload of a 10MB file takes 800ms, and goes up to 8sec for a 100MB file: in both cases handling the object metadata would be only a fraction of the upload time. The only cases where a difference would be noticeable would be when uploading a lot of very small files at once, which again would be an unusual usage of the S3 API.

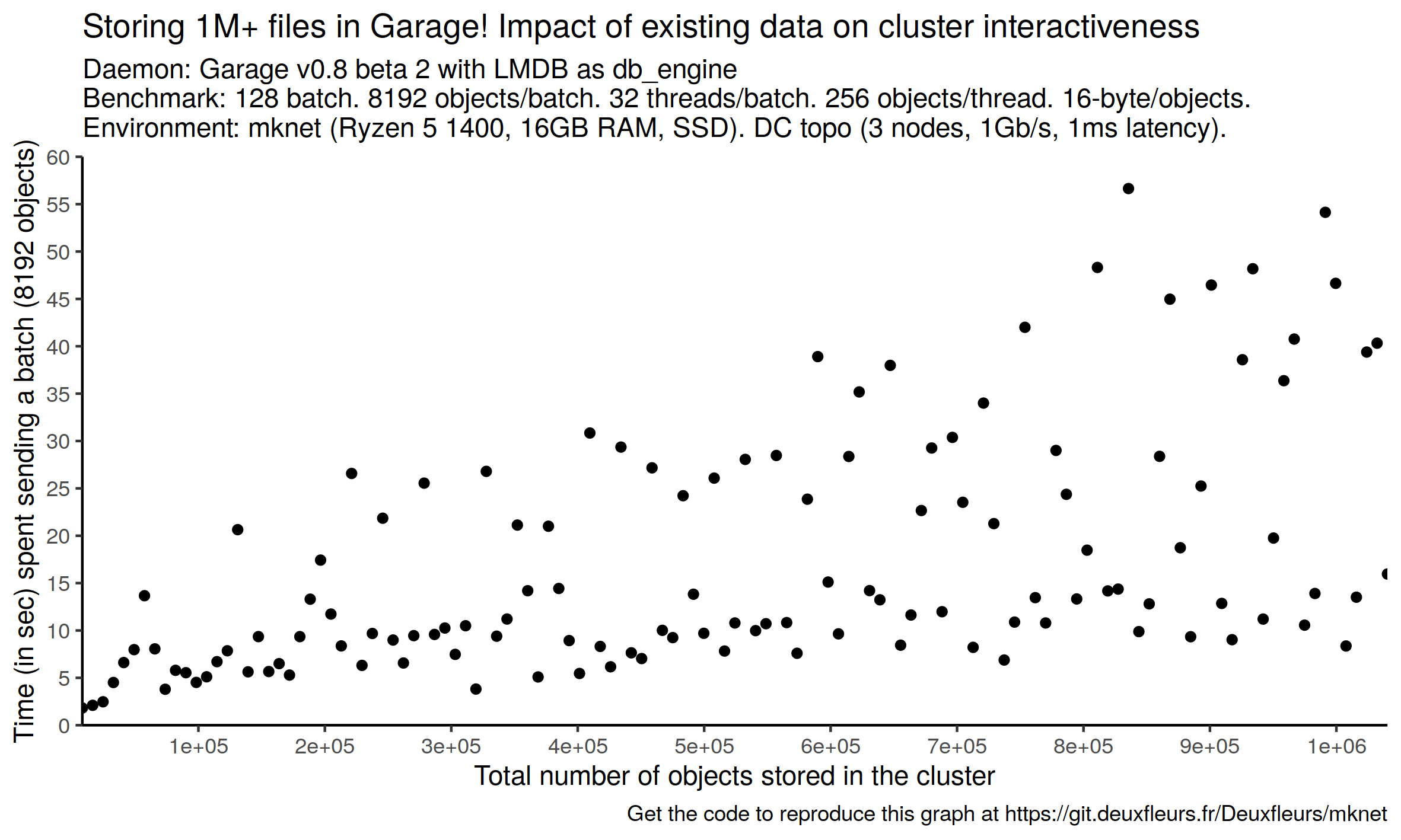

Let us now focus on Garage's metrics only to better see its specific behavior:

Two effects are now more visible: 1., batch completion time increases with the number of objects in the bucket and 2., measurements are scattered, at least more than for Minio. We expected this batch completion time increase to be logarithmic, but we don't have enough data points to conclude confidently it is the case: additional measurements are needed. Concerning the observed instability, it could be a symptom of what we saw with some other experiments on this setup, which sometimes freezes under heavy I/O load. Such freezes could lead to request timeouts and failures. If this occurs on our testing computer, it might occur on other servers as well: it would be interesting to better understand this issue, document how to avoid it, and potentially change how we handle I/O internally in Garage. But still, this was a very heavy test that will probably not be encountered in many setups: we were adding 273 objects per second for 30 minutes straight!

To conclude this part, Garage can ingest 1 million tiny objects while remaining usable on our local setup. To put this result in perspective, our production cluster at deuxfleurs.fr smoothly manages a bucket with 116k objects. This bucket contains real-world production data: it is used by our Matrix instance to store people's media files (profile pictures, shared pictures, videos, audio files, documents...). Thanks to this benchmark, we have identified two points of vigilance: the increase of batch insert time with the number of existing objects in the cluster in the observed range, and the volatility in our measured data that could be a symptom of our system freezing under the load. Despite these two points, we are confident that Garage could scale way above 1M objects, although that remains to be proven.

In an unpredictable world, stay resilient

Supporting a variety of real-world networks and computers, especially ones that were not designed for software-defined storage or even for server purposes, is the core value proposition of Garage. For example, our production cluster is hosted on refurbished Lenovo Thinkcentre Tiny desktop computers behind consumer-grade fiber links across France and Belgium (if you are reading this, congratulation, you fetched this webpage from it!). That's why we are very careful that our internal protocol (referred to as "RPC protocol" in our documentation) remains as lightweight as possible. For this analysis, we quantify how network latency and number of nodes in the cluster impact the duration of the most important kinds of S3 requests.

Latency amplification - With the kind of networks we use (consumer-grade fiber links across the EU), the observed latency between nodes is in the 50ms range. When latency is not negligible, you will observe that request completion time is a factor of the observed latency. That's to be expected: in many cases, the node of the cluster you are contacting cannot directly answer your request, and has to reach other nodes of the cluster to get the data. Each of these sequential remote procedure calls - or RPCs - adds to the final S3 request duration, which can quickly become expensive. This ratio between request duration and network latency is what we refer to as latency amplification.

For example, on Garage, a GetObject request does two sequential calls: first,

it fetches the descriptor of the requested object from the metadata engine, which contains a reference

to the first block of data, and then only in a second step it can start retrieving data blocks

from storage nodes. We can therefore expect that the

request duration of a small GetObject request will be close to twice the

network latency.

We tested the latency amplification theory with another benchmark of our own named s3lat which does a single request at a time on an endpoint and measures the response time. As we are not interested in bandwidth but latency, all our requests involving objects are made on tiny files of around 16 bytes. Our benchmark tests 5 standard endpoints of the S3 API: ListBuckets, ListObjects, PutObject, GetObject and RemoveObject. Here are the results:

As Garage has been optimized for this use case from the very beginning, we don't see any significant evolution from one version to another (Garage v0.7.3 and Garage v0.8.0 Beta 1 here). Compared to Minio, these values are either similar (for ListObjects and ListBuckets) or significantly better (for GetObject, PutObject, and RemoveObject). This can be easily explained by the fact that Minio has not been designed with environments with high latencies in mind. Instead, it is expected to run on clusters that are built in a singe data center. In a multi-DC setup, different clusters could then possibly be interconnected with their asynchronous bucket replication feature.

Minio also has a multi-site active-active replication system but it is even more sensitive to latency: "Multi-site replication has increased latency sensitivity, as Minio does not consider an object as replicated until it has synchronized to all configured remote targets. Replication latency is therefore dictated by the slowest link in the replication mesh."

A cluster with many nodes - Whether you already have many compute nodes with unused storage, need to store a lot of data, or are experimenting with unusual system architectures, you might be interested in deploying over a hundred Garage nodes. However, in some distributed systems, the number of nodes in the cluster will have an impact on performance. Theoretically, our protocol, which is inspired by distributed hash tables (DHT), should scale fairly well, but until now, we never took the time to test it with a hundred nodes or more.

This test was run directly on Grid5000 with 6 physical servers spread in 3 locations in France: Lyon, Rennes, and Nantes. On each server, we ran up to 65 instances of Garage simultaneously, for a total of 390 nodes. The network between physical servers is the dedicated network provided by the Grid5000 community. Nodes on the same physical machine communicate directly through the Linux network stack without any limitation. We are aware that this is a weakness of this test, but we still think that this test can be relevant as, at each step in the test, each instance of Garage has 83% (5/6) of its connections that are made over a real network. To measure performances for each cluster size, we used s3lat again:

Up to 250 nodes, we observed response times that remain constant. After this threshold, results become very noisy. By looking at the server resource usage, we saw that their load started to become non-negligible: it seems that we are not hitting a limit on the protocol side, but have simply exhausted the resource of our testing nodes. In the future, we would like to run this experiment again, but on many more physical nodes, to confirm our hypothesis. For now, we are confident that a Garage cluster with 100+ nodes should work.

Conclusion and Future work

During this work, we identified some sensitive points on Garage,

on which we will have to continue working: our data durability target and interaction with the

filesystem (O_DSYNC, fsync, O_DIRECT, etc.) is not yet homogeneous across

our components; our new metadata engines (LMDB, SQLite) still need some testing

and tuning; and we know that raw I/O performances (GetObject and PutObject for large objects) have a small

improvement margin.

At the same time, Garage has never been in better shape: its next version (version 0.8) will see drastic improvements in terms of performance and reliability. We are confident that Garage is already able to cover a wide range of deployment needs, up to over a hundred nodes and millions of objects.

In the future, on the performance aspect, we would like to evaluate the impact of introducing an SRPT scheduler (#361), define a data durability policy and implement it, make a deeper and larger review of the state of the art (Minio, Ceph, Swift, OpenIO, Riak CS, SeaweedFS, etc.) to learn from them and, lastly, benchmark Garage at scale with possibly multiple terabytes of data and billions of objects on long-lasting experiments.

In the meantime, stay tuned: we have released a first release candidate for Garage v0.8, and are already working on several features for the next version. For instance, we are working on a new layout that will have enhanced optimality properties, as well as a theoretical proof of correctness (#296). We are also working on a Python SDK for Garage's administration API (#379), and we will soon officially introduce a new API (as a technical preview) named K2V (see K2V on our doc for a primer).

Notes

-

Yes, we are aware of Jepsen's existence. Jepsen is far more complex than our set of scripts, but it is also way more versatile. ↩︎

-

The program name contains the word "billion", although we only tested Garage up to 1 million objects: this is not a typo, we were just a little bit too enthusiastic when we wrote it ;) ↩︎